Understanding CMX (Context Memory eXtension) in Data Storage for AI

NVIDIA CMX™ (Context Memory eXtension) is an AI-native storage tier engineered to store and manage Key-Value (KV) cache for long-context, multi-turn, and agentic AI inference. By extending GPU memory with a dedicated, pod-level flash tier “G3.5,” CMX prevents the "memory wall" bottleneck, allowing AI models to maintain massive conversational histories and complex reasoning states without exhausting expensive HBM (High Bandwidth Memory).

What is CMX (Context Memory eXtension)?

CMX (formerly known as ICMS or Inference Context Memory Storage) is a specialized storage platform designed to offload and reuse the KV cache generated during AI inference. It sits between fast GPU memory and traditional backend storage, acting as a "pod-level context tier" that enables AI agents to retain long-term memory. NVIDIA has reported up to 5x higher tokens-per-second and 5x better power efficiency for CMX-based inference, though the underlying workload, configuration, and baseline have not been publicly disclosed.2

From ICMS to CMX: The rebranding of AI memory

Originally introduced as ICMS, NVIDIA rebranded the technology to CMX to emphasize its role as a persistent "Context Memory Storage" layer. This shift marks the transition from treating context as a temporary session artifact to treating it as a strategic, reusable asset.2

- Legacy Approach: KV cache was either kept HBM-resident (capacity-limited), offloaded to local SSD (not shareable across the pod), pushed to traditional network storage (contention and tail latency), or simply recomputed (wasted compute, energy, and TTFT).3

- CMX Approach: Context is offloaded to an Ethernet-attached flash tier managed by BlueField-4 DPUs, making it accessible across the entire compute pod.

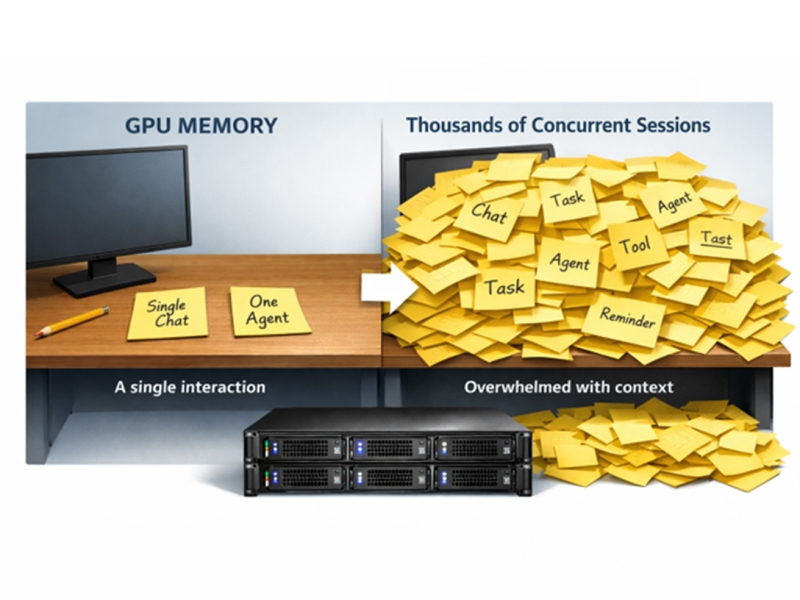

Why CMX is essential for agentic AI

Modern AI "agents" don't just answer questions; they perform multi-step reasoning that requires millions of tokens of context.

- Persistence: Sessions don't "time out" or lose detail when they exceed GPU memory.

- Sharing: Multiple AI agents can access the same context memory pool simultaneously.

- Efficiency: Reusing precomputed KV cache from CMX instead of recomputing it saves massive amounts of compute cycles and power.

How CMX architecture functions

CMX architecture functions as a disaggregated memory tier between Tier 3 and Tier 4, known as Tier G3.5 memory. CMX utilizes NVIDIA BlueField-4 STX data processing units (DPUs) to manage NVMe SSDs over a Spectrum-X Ethernet fabric.

It uses two specialized software tools to manage how memory is moved around behind the scenes. DOCA Memos provides a key-value API that lets inference frameworks read and write KV cache blocks to the CMX tier without involving the host CPU. NIXL (NVIDIA Inference Transfer Library) coordinates the timing, making sure the GPU has the information it needs before it actually asks for it, rather than sitting idle once it does.2

The data being moved is the KV cache, the AI agent’s short-term memory of the current conversation or context. Moving it in pre-organized blocks makes the process more efficient so when the GPU needs to "remember" earlier parts of a conversation, that memory is already waiting, so it doesn't slow down generating the next result.

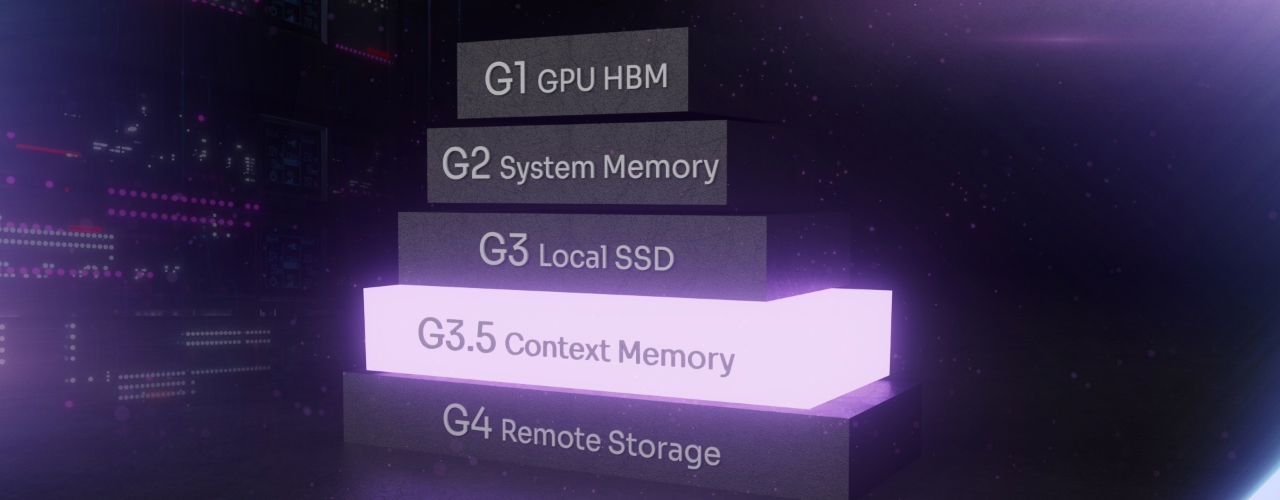

The memory hierarchy with CMX

CMX introduces a new layer into the standard data center stack:

- Tier 0 memory: Fastest memory, located directly inside the GPU. Extremely low latency, very small capacity, used for immediate active computations.

- Tier 1 memory: High Bandwidth Memory (HBM) co-packaged with the accelerator (e.g., Blackwell, Rubin) provides the bandwidth needed to feed compute cores during inference.

- Tier 2 memory: DRAM/Host Memory (Grace/Vera CPU), high-speed system RAM (Grace/Vera CPU), is used for short-term spillover. It’s higher capacity than HBM and higher latency as well.

- Tier 3 memory: Local SSD Node-local NVMe flash. Extends capacity beyond DRAM but is not shareable across the pod.

- Tier 3.5 memory: CMX (The G3.5 Tier) is Ethernet-attached flash optimized for hot, reusable KV cache. Accessible across the entire compute pod via BlueField-4 and Spectrum-X, so the GPU does not have to recompute context for the next step.3

- Tier 4 memory: Storage, usually NVMe/Flash, is used for long-term storage, data lakes, and cold data archiving.

The role of BlueField-4 and DOCA Memos

- BlueField-4 DPU acts as the "storage brain" for CMX. It offloads data integrity, encryption, and KV cache routing from the GPU, enabling compute resources to focus entirely on token generation.

- DOCA Memos provides the simplified Key-Value API that allows applications to interact with this storage as if it were a local cache.

Key features and capabilities of CMX

CMX delivers enhanced performance through hardware-accelerated KV cache placement, RDMA-based data transfers via Spectrum-X, and seamless orchestration through NVIDIA Dynamo. It’s designed to maximize GPU utilization by eliminating idle time or "stalls" caused by context recomputation, and provides a secure, multi-tenant environment for large-scale enterprise AI factories.

NVIDIA-Reported Performance and Efficiency Gains

| Metric | Traditional Storage | NVIDIA CMX Platform |

| Throughput (TPS) | Baseline (1x) | Up to 5x Higher2 |

| Power Efficiency | Standard | Up to 5x Better2 |

| TTFT Latency | High (Recompute) | Low (Cache Reuse) |

| Scaling Logic | General-purpose | AI-Native (KV-aware) |

KV cache reuse and NIXL

NVIDIA CMX uses NIXL (NVIDIA Inference Transfer Library) to turn Ethernet-attached flash into a context pool, allowing AI to resume complex tasks instantly without re-reading entire datasets. This "instant resume" capability enables truly agentic workflows, where an AI can pause a task, wait for external input, and resume with its full cognitive state intact.

Where CMX fits in the memory hierarchy

To understand the impact of CMX, it is helpful to view it as the "missing link" in the modern data center. Traditionally, a sizable performance gap existed between fast DRAM memory in Tier 3 and Tier 4’s standard network storage. CMX introduces a new specialized layer, referred to as Tier G3.5 memory, designed specifically to handle the "hot" situational data that AI agents need to recall instantly.

The hierarchy is structured to balance speed, capacity, and cost, with each tier increasing in both size and latency. By inserting CMX into this hierarchy, NVIDIA allows the GPU to offload the "state" of a conversation to a cost-effective flash tier. When the user returns to the chat or the agent moves to the next step of a task, the NIXL (NVIDIA Inference Transfer Library) pulls that specific memory back into the GPU instantly, bypassing the need for expensive recomputation.2

Use cases for CMX in the modern enterprise

CMX is the foundational infrastructure for long-context reasoning, "instant resume" sessions, and multi-agent collaboration. It is ideal for enterprises deploying trillions of parameters across billions of tokens, where maintaining a persistent, high-speed memory layer is the only way to scale without incurring prohibitive costs or latency.

Multi-turn agentic reasoning

In complex legal or medical analysis, an agent may need to "remember" thousands of pages of documentation across days of interaction. CMX ensures this context is "pre-staged" to the GPU the moment the user interacts, making the AI agent feel responsive and deeply knowledgeable.

High-concurrency AI factories

For organizations with thousands of concurrent users, CMX prevents the "memory wall" from crashing the system. By offloading KV cache to the CMX tier, the system can support more users per GPU, significantly lowering the Total Cost of Ownership (TCO).

Implementation and ecosystem

Implementing CMX requires NVIDIA's BlueField-4 STX (Storage Technology eXtensions) processor as the foundation, paired with E3.S NVMe SSDs in liquid-cooled JBOF enclosures. NVIDIA provides the modular reference architecture; manufacturing and storage partners build the platforms. Compute pods access CMX over Spectrum-X Ethernet using RDMA, and KV cache movement is orchestrated by NVIDIA Dynamo with DOCA Memos handling the I/O plane on BF4.

The ecosystem of CMX enclosures

NVIDIA does not build the SSD enclosures themselves. The STX reference architecture is implemented by partners across several layers with platforms beginning to ship in the second half of 2026.4

- Manufacturing partners (JBOF/platform builders): AIC, Supermicro, and Quanta Cloud Technology (QCT).

- System OEMs: Dell Technologies, HPE, IBM, NetApp, Hitachi Vantara, and Nutanix.

- Storage software providers: VAST Data, WEKA, DDN, MinIO, Cloudian, and Everpure.

- Early adopter cloud providers: CoreWeave, Crusoe, IREN, Lambda, Mistral AI, Nebius, Oracle Cloud Infrastructure, and Vultr.

What Solidigm™ products are the best SSDs for CMX?

The right SSD for a CMX deployment depends on where in the KV cache lifecycle the workload spends most of its time and whether the constraint is latency headroom or rack-level density. Solidigm offers two SSDs that map to opposite ends of this design space.

Solidigm™ D7-PS1010 for the hot, reuse-dominant context tier

The Solidigm D7-PS1010 is a PCIe Gen5 TLC NVMe SSD built for high throughput and predictable latency under sustained, real-world inference load. In long-context reasoning, multi-turn agentic sessions, and high-concurrency pods where stalls translate directly into idle GPU cycles, the D7-PS1010 is the preferred choice. Its performance profile is designed for the latency-sensitive reads that sit on the critical path of token generation, exactly the conditions a pod-level context tier must serve.

Solidigm™ D5-P5336 for capacity-anchored context and warm spillover

The Solidigm D5-P5336 is a high-density QLC NVMe SSD available in capacities up to 122TB. In CMX deployments where the constraint is terabytes-per-rack, the D5-P5336 maximizes density inside tight rack and power envelopes. It also anchors the Tier 4 network storage layer that feeds the CMX tier above, making it a natural fit for organizations building out the full inference storage hierarchy with a single vendor.

Choosing between them

As a general guideline:

- Reuse-heavy, latency-sensitive KV traffic: D7-PS1010

- Capacity-anchored, density-constrained deployments: D5-P5336

- Mixed pods: Both, with D7-PS1010 serving the active CMX tier and D5-P5336 anchoring warm context and the data lake below it

For a deeper discussion of why flash — and specifically these design tradeoffs — is the right answer to the inference memory wall, see the Solidigm article Inference Context Memory Storage (ICMS): Why AI Inference is Becoming a Problem Only Flash Can Solve.

The future of AI rests on Context Memory Storage

The transition from the traditional 4 tier memory hierarchy to CMX represents a pivotal shift in how the industry handles the "memory" of artificial intelligence. By moving beyond the limitations of traditional GPU VRAM and system DRAM, CMX provides the high-bandwidth, low-latency foundation required for the next generation of agentic AI.

As models evolve to handle trillions of parameters and millions of tokens in a single session, the ability to store and reuse Key-Value cache effectively is no longer an optimization; it is a requirement. CMX ensures that AI factories can scale to meet this demand with 5x better power efficiency2 and significantly higher throughput, effectively breaking the "memory wall" that has previously constrained long-context reasoning.

For enterprises building AI at the edge of innovation, CMX is the cognitive infrastructure that transforms a stateless chatbot into a persistent, reasoning tool. By integrating this specialized "G3.5" tier into the data center stack, organizations can finally deliver AI experiences that are as deeply contextual as they are computationally powerful.

FAQs

No, CMX is much more than hardware. While CMX uses NVMe SSDs like the Solidigm D7-PS1010 or D5-P5336, the "magic" lies in the BlueField-4 DPU and the software stack (DOCA Memos, NIXL, and Dynamo). This combination allows the system to understand the specific structure of KV cache and move it between GPUs and storage without involving the host CPU, which traditional SSDs cannot do.

GPUs often sit idle while waiting for context data to be recomputed or fetched from slow storage. CMX keeps this data in a "hot" tier that is specifically tuned for AI workloads. By reusing precomputed KV cache, the GPU spends less time on redundant work and more time generating new tokens.

NVIDIA categorizes data center memory into tiers. G1 and G2 are on-chip and on-node memory. G3 is traditionally local DRAM. CMX creates "G3.5" as a new category of Ethernet-attached, pod-level context memory that is faster and more efficient than traditional networked storage (G4) but more scalable than local SSDs.

The rebranding to CMX was likely done for clarity and market alignment. "Context Memory Storage" or "Context Memory eXtension" is a more descriptive term for the technology's role in the AI stack, highlighting that it is a specialized storage platform for the "memory" of AI models rather than just a management system.

Yes, CMX is built to run on the NVIDIA Spectrum-X Ethernet platform. This is critical because it uses RDMA (Remote Direct Memory Access) to transfer data with zero-copy efficiency. Without the low-latency, lossless fabric of Spectrum-X, the performance benefits of the CMX tier would be bottlenecked by network jitter.

Currently, CMX is a full-stack NVIDIA solution. It is designed to work within the Vera Rubin and Blackwell platforms, leveraging NVIDIA-specific libraries like NIXL and DOCA. It is tightly integrated with the NVIDIA ecosystem to provide the sub-millisecond latency required for inference.

Data in the CMX tier is treated as "ephemeral context." Depending on the policy set in the orchestration layer (like NVIDIA Dynamo), the context can be cached for future reuse, moved to cold storage for long-term archiving, or deleted to free up space for new sessions.

Recomputing a million tokens of context every time a user asks a new question consumes a massive amount of electricity. By storing the precomputed state in CMX and simply "reading" it back, the system uses significantly less power than it would by running the full inference calculation again.

NVIDIA CMX and the underlying BlueField-4 STX architecture are expected to be available through hardware and storage partners starting in the second half of 2026. Major vendors like AIC, Supermicro, and QCT have already showcased the first CMX-compatible storage servers.

About the Authors

Jeff Harthorn is AI Applied Research Lead at Solidigm. His work focuses on the relationship between AI workloads and storage architecture, with an emphasis on inference, context memory, and data pipeline design. Jeff combines applied research, benchmarking, and technical storytelling to turn complex infrastructure topics into actionable insights for customers, collaborators, and senior leadership. He holds a Bachelor of Science in Computer Engineering from California State University, Sacramento.

Cecily Whiteside is Search and Content Specialist at Solidigm. She writes for technology, lifestyle, and health & wellness websites and publications. Cecily has been managing editor at several magazines and contributed as a writer and photographer in others, both in the US and abroad.

References:

- NVIDIA CMX Context Memory Storage Platform; NVIDIA (https://www.nvidia.com/en-us/data-center/ai-storage/cmx/)

- Introducing NVIDIA BlueField-4-Powered CMX Context Memory Storage Platform for the Next Frontier of AI; NVIDIA. (https://developer.nvidia.com/blog/introducing-nvidia-bluefield-4-powered-inference-context-memory-storage-platform-for-the-next-frontier-of-ai/)

- Inference Context Memory Storage (ICMS): Why AI inference is becoming a problem only flash can solve; Solidigm. (https://www.solidigm.com/products/technology/icmsp-ai-inference-is-flash-storage-problem.html)

- NVIDIA Vera Rubin Opens Agentic AI Frontier; NVIDIA Newsroom. (https://nvidianews.nvidia.com/news/nvidia-vera-rubin-platform)