Liquid Cooling Basics

Overview of liquid cooling for data center components

AI training, inference, real-time analytics, and increasingly dense hardware architecture are rewriting what “normal power range” looks like in the data center. Racks that once sat comfortably in the 5 kW to 15 kW range are now being designed for 30 kW, 60 kW, and beyond. As power density climbs, the old assumptions about airflow, fan walls, hot aisles, and raised floors start to break down. At a certain point, cooling with air is not just hard; it becomes inefficient, space-consuming, and operationally fragile.

That’s why liquid cooling has moved from a niche option to a central infrastructure conversation. Data center liquid cooling isn’t one single product or architecture; it’s a set of techniques that move heat using liquid’s superior thermal capacity, making it possible to support high-density data center cooling without turning the entire facility into a wind tunnel.

What is liquid cooling?

Liquid cooling is a method of removing heat by circulating a liquid coolant through or near heat-generating components, then rejecting that heat elsewhere (often via a heat exchanger) where it can be dissipated more efficiently. In a data center context, it generally means capturing heat close to where it is produced—inside the rack, inside the server, or directly at the chip—so the facility doesn’t need to rely solely on moving huge volumes of chilled air.

Liquid cooling’s key advantage is physics. Compared to air, liquid can absorb around 3200x more heat per unit volume.1 That means you can move the same amount of heat with:

- Lower flow volume

- Smaller temperature differentials in the “room”

- Less fan energy

- Less reliance on extreme airflow management
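
To make the volume argument concrete, the sketch below compares how much water and how much air must flow to carry the same heat at the same temperature rise. The property values are textbook figures near room temperature, and the 60 kW load and 10 K rise are illustrative assumptions rather than numbers from the cited source. With these inputs the ratio lands in the low thousands, the same order of magnitude as the figure quoted above; the exact value shifts with temperature and pressure.

```python
# Textbook property values near room temperature (assumptions, not from the article).
WATER_DENSITY = 997.0   # kg/m^3
WATER_CP = 4180.0       # J/(kg*K)
AIR_DENSITY = 1.2       # kg/m^3
AIR_CP = 1005.0         # J/(kg*K)


def volumetric_heat_capacity(density, specific_heat):
    """Heat absorbed per cubic metre per kelvin, in J/(m^3*K)."""
    return density * specific_heat


def flow_for_heat_load(heat_w, delta_t_k, density, specific_heat):
    """Volume flow (m^3/s) needed to carry heat_w watts at a delta_t_k rise."""
    return heat_w / (volumetric_heat_capacity(density, specific_heat) * delta_t_k)


ratio = (volumetric_heat_capacity(WATER_DENSITY, WATER_CP)
         / volumetric_heat_capacity(AIR_DENSITY, AIR_CP))

# Hypothetical 60 kW rack with a 10 K temperature rise in either fluid.
water_flow = flow_for_heat_load(60_000, 10, WATER_DENSITY, WATER_CP)
air_flow = flow_for_heat_load(60_000, 10, AIR_DENSITY, AIR_CP)

print(f"water/air volumetric heat capacity ratio: {ratio:.0f}")
print(f"water flow: {water_flow * 1000 * 60:.0f} L/min")
print(f"air flow:   {air_flow:.2f} m^3/s")
```

Under these assumptions the water loop needs under 90 litres per minute, while the air path needs roughly 5 cubic metres per second for the same rack.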

In practical terms, liquid cooling in data centers typically follows a heat-removal chain like this:

- Heat is captured at the chip, at the server, or at the rack

- Heat is transported via coolant loop(s)

- Heat is rejected to a facility water loop, dry cooler, cooling tower, or chiller plant, depending on design

Because there are multiple ways to “capture” heat, liquid cooling technology shows up in several architectures, some incremental (like rear-door heat exchangers), some transformative (like immersion). Data centers can adopt liquid cooling solutions gradually, or design a fully liquid-ready facility from day one.

Why use liquid cooling?

Data centers adopt liquid cooling for a mix of performance, economics, and risk-management reasons, especially when scaling AI and HPC.

It supports higher rack densities

Many operators report that once you get past certain system thresholds, air cooling becomes increasingly impractical. Industry commentary commonly points to the difficulty of relying on air alone above ~20 kW per rack, while AI racks can reach far higher ranges.2

It can reduce energy spent on “moving air”

Fans and airflow infrastructure are not free. Even before you consider chillers, air-cooled designs often spend meaningful power pushing air through increasingly restrictive server designs. Liquid cooling can lower fan requirements and help the facility run at warmer setpoints depending on architecture.3

It improves thermal stability

When components run hot, they may throttle, error, or reduce boost behavior. Liquid cooling in data center environments can provide more consistent inlet and component temperatures, helping performance stay predictable.

It opens design options: denser racks, smaller footprints, new facility constraints

If you’re building in space-constrained metros or retrofitting older buildings, liquid cooled servers can be a pathway to modern compute densities without rebuilding the entire HVAC approach.4

What is liquid cooling in data centers?

Liquid cooling in data centers refers to any cooling approach that uses liquid, rather than air alone, as the primary transport medium for heat removal.

In practice, most data center implementations follow one of these patterns:

- Facility-focused liquid integration: The data center uses liquid loops (chilled water, condenser water, warm water) as the backbone, often paired with heat exchangers that support liquid-cooled racks.

- Rack-focused liquid cooling: A liquid cooled server rack may incorporate in-row coolers, rear-door heat exchangers, or direct-to-chip manifolds that connect servers to a coolant distribution unit (CDU).

- Server/component-focused liquid cooling: The server is designed for direct liquid contact at the hottest components, such as cold plates on GPUs/CPUs and increasingly, purpose-built approaches for SSD liquid cooling in specialized builds.

As AI growth accelerates, sustainability constraints are also shaping design choices. Water availability and cooling-method tradeoffs are becoming more visible in public reporting, and operators are exploring closed-loop and lower-water approaches alongside capacity expansion. Operators are also increasingly tracking WUE (Water Usage Effectiveness) as a metric.5
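
As a rough illustration of how WUE is calculated, the sketch below uses the common definition of the metric: annual site water use in litres divided by annual IT equipment energy in kWh. The facility figures (1 MW average IT load, 15 million litres of water per year) are invented for the example and do not describe any real site.

```python
def water_usage_effectiveness(annual_site_water_liters, annual_it_energy_kwh):
    """WUE in litres per kWh: annual site water use divided by annual IT energy."""
    if annual_it_energy_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return annual_site_water_liters / annual_it_energy_kwh


# Hypothetical facility: 1 MW average IT load for one year, 15 million litres of water.
it_energy_kwh = 1_000 * 24 * 365   # kW multiplied by hours per year
wue = water_usage_effectiveness(15_000_000, it_energy_kwh)

print(f"WUE = {wue:.2f} L/kWh")
```

A lower value means less water consumed per unit of IT work, so closed-loop designs would be expected to show up as a smaller number here.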

Types of data center liquid cooling techniques

Rear-door heat exchangers (RDHx)

Rear-door heat exchangers replace the rear door of a rack with a liquid-cooled radiator. Hot exhaust air passes through the door and gets cooled before entering the room.

Where it fits best

- Transitional deployments where you want meaningful heat capture without redesigning servers

- Mixed environments with both air-cooled and liquid-assisted racks

Key things to watch out for

- You’re still moving air through servers, so fan power remains relevant

- You need rack-level plumbing and controls for safe operation

Direct-to-chip (cold plate) cooling

Direct-to-chip is often the “default” picture people have of data center liquid cooling: coolant is routed through cold plates attached to hot components (GPUs, CPUs, SSDs).

This category is especially important for liquid cooling in AI data centers, where GPUs dominate the thermal profile, rack densities can leap well beyond conventional designs, and some deployments reach almost a quarter-million GPUs.6

What makes it compelling

- High heat capture efficiency at the source

- Enables higher density with potentially lower room airflow requirements

Operational considerations

- Plumbing complexity inside the rack

- Leak detection, quick-disconnect reliability, maintenance procedures

Immersion cooling (single-phase and two-phase)

Immersion cooling places servers or components into a dielectric fluid. Heat transfers directly into the fluid, which is then cooled via a heat exchanger.

Why teams choose immersion

- Very high heat removal capacity

- Potentially simpler airflow design, with few or no fans

Why teams hesitate

- Serviceability culture shift and the difficulty of handling submerged hardware

- Vendor lock-in concerns and operational learning curve

- Compatibility and material considerations

ASHRAE and industry guidance continue to evolve as these techniques move mainstream, emphasizing reliability, operability, and risk controls as power densities rise.7

In-row and close-coupled liquid cooling

In-row cooling places liquid-cooled units close to the racks, reducing the distance air must travel, thus improving control. It’s often used in high-density zones inside otherwise conventional facilities.

Good for

- Hybrid deployments

- Targeted high-density pods (pre-engineered, modular data center units that integrate power, cooling, and hardware racks for rapid deployment)

Facility “warm water” loops and CDUs

Many modern designs use coolant distribution units (CDUs) to isolate the facility loop from the IT loop. This supports increased control, cleanliness, and pressure management, and it can make retrofits more feasible.
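
The isolation a CDU provides can be pictured as two loops exchanging heat through one heat exchanger, each obeying the same energy balance (heat equals mass flow times specific heat times temperature rise). The sketch below is a simplified model under stated assumptions: water on both sides, no losses, and invented flow rates, temperatures, and load. The `Loop` class and its fields are hypothetical names, not part of any vendor's interface.

```python
from dataclasses import dataclass

WATER_CP = 4180.0       # J/(kg*K), textbook value (assumption)
WATER_DENSITY = 997.0   # kg/m^3, textbook value (assumption)


@dataclass
class Loop:
    """One side of the CDU heat exchanger (hypothetical model)."""
    supply_temp_c: float    # coolant temperature before it picks up heat
    flow_l_per_min: float   # volume flow in litres per minute

    def mass_flow_kg_s(self):
        return self.flow_l_per_min / 1000 / 60 * WATER_DENSITY

    def return_temp_c(self, heat_w):
        """Temperature after absorbing heat_w watts: T_out = T_in + Q / (m_dot * cp)."""
        return self.supply_temp_c + heat_w / (self.mass_flow_kg_s() * WATER_CP)


it_load_w = 80_000  # hypothetical group of racks served by one CDU

it_loop = Loop(supply_temp_c=32.0, flow_l_per_min=120.0)        # IT-side loop
facility_loop = Loop(supply_temp_c=25.0, flow_l_per_min=150.0)  # facility-side loop

it_return = it_loop.return_temp_c(it_load_w)
facility_return = facility_loop.return_temp_c(it_load_w)

print(f"IT loop returns to the CDU at {it_return:.1f} C")
print(f"Facility loop leaves the CDU at {facility_return:.1f} C")
```

Because the two loops only share a heat exchanger, flow rate and supply temperature on the IT side can be tuned without touching facility water chemistry or pressure.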

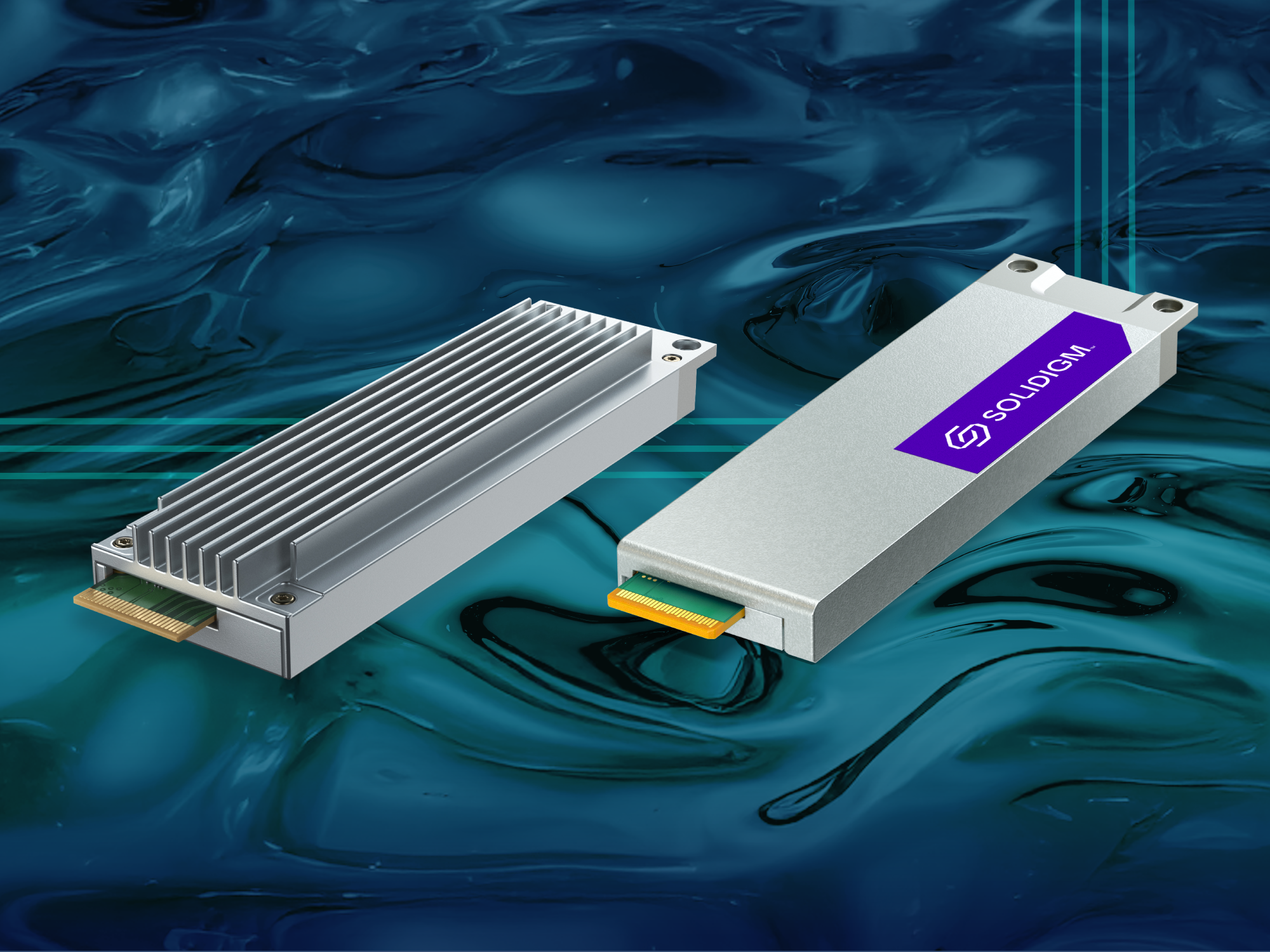

Liquid cooled SSDs: How do they work?

A liquid cooled SSD is not just an SSD with “better heatsinks.” It’s an SSD engineered so liquid cooling interfaces can remove heat effectively without breaking the operational expectations of enterprise storage, such as hot-swap serviceability, predictable fit, and maintainability at scale.

Solidigm has worked with NVIDIA to address SSD liquid-cooling challenges, such as hot-swappability and single-side cooling. The Solidigm fully liquid-cooled SSD solution cools both sides of the SSD with a single cold plate and is hot-swappable, which saves space and eases maintenance.

Liquid cooling vs air cooling

Air and liquid cooling both remove heat from IT equipment, but they do it in fundamentally different ways. Use this comparison to quickly match the cooling approach to your density, reliability, and operational needs.

How each one moves heat

Air cooling

- Uses fans to push cool air through servers and carry heat out into the room

- Relies on room airflow design, such as hot aisle/cold aisle, containment, CRAC/CRAH delivery

Liquid cooling

- Uses a coolant loop to absorb heat close to the source and carry it to a heat exchanger

- Relies on plumbing, CDUs/manifolds, and controlled coolant flow rather than room airflow alone

Where each approach works best

Air cooling

- General-purpose enterprise racks with moderate densities

- Mixed environments where hardware changes frequently

- Facilities optimized around conventional airflow and containment

Liquid cooling

- High-density zones, such as AI, HPC, high-throughput compute/storage clusters

- Racks where thermals are difficult to manage with airflow alone

- Pods designed for repeatable scaling at higher power per rack

What happens as rack density increases

Air cooling

- Requires much higher airflow volume to remove the same heat

- Fan speeds often rise, increasing power overhead and noise

- Small airflow disruptions, such as cabling, missing blanks, and uneven pressure, can create hot spots

Liquid cooling

- Heat removal scales with coolant flow and heat exchanger capacity

- Typically maintains more stable component temperatures as density rises

- Room airflow becomes less of a primary constraint, although it may still matter for non-liquid-cooled components
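
One way to see why fan overhead grows so quickly with density is the fan affinity law, under which fan power scales with the cube of airflow for a fixed fan and duct system. The sketch below applies that idealization to the rack sizes mentioned earlier in this article, assuming airflow must rise in proportion to heat at a fixed air temperature rise. Real deployments add fans or change the airflow path, so treat the multipliers as a directional illustration rather than a prediction.

```python
def relative_fan_power(flow_ratio):
    """Fan affinity law: power scales with the cube of flow for a fixed fan and system."""
    return flow_ratio ** 3


baseline_rack_kw = 15  # top of the traditional range cited in this article

for rack_kw in (15, 30, 60):
    # At a fixed air temperature rise, required airflow scales linearly with heat.
    flow_ratio = rack_kw / baseline_rack_kw
    power_ratio = relative_fan_power(flow_ratio)
    print(f"{rack_kw} kW rack: airflow x{flow_ratio:.0f}, fan power x{power_ratio:.0f}")
```

Liquid loops follow similar pump laws, but the far smaller volume of fluid needed keeps the absolute pumping power much lower for the same heat.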

What this means for storage and SSDs

Air-cooled storage

- SSDs depend on chassis airflow and heatsinks to stay within spec

- In high-throughput NVMe environments, sustained workloads can heat up drives and increase the chance of throttling if airflow margins are tight

- Hotter air coming off the storage makes it harder to cool components further inside the system before that air is recirculated

SSD liquid cooling

- Drives can be integrated into the liquid-cooled thermal design especially in dense, liquid-oriented server platforms

- Enterprise-friendly implementations focus on keeping data center workflows intact with predictable insertion, hot-swap expectations, and serviceability while improving heat removal for sustained performance

- Removing fans allows for better system design while still meeting serviceability requirements

Practical takeaway for data center teams

Air cooling tends to fit environments where airflow engineering is already the operational foundation and rack densities remain within established comfort zones.

Liquid cooling tends to fit environments where density targets, AI growth, or platform roadmaps make airflow the bottleneck, and where teams are ready to operate a cooling system that behaves more like infrastructure (loops, controls, procedures) than room-level HVAC alone.

What is hybrid cooling?

Hybrid cooling blends air and liquid approaches in the same facility, row, or even rack—using each where it makes the most sense.

A hybrid cooling system is common when:

- Only a portion of the environment is high density such as AI pods inside a broader enterprise facility

- The organization wants incremental adoption

- Legacy equipment and new liquid-ready platforms must coexist

Common hybrid cooling patterns

- Direct-to-chip liquid for GPUs/CPUs/SSDs, with air still cooling some components

- RDHx on select rows while the rest of the room remains traditional

- Liquid-cooled AI clusters in dedicated rooms, with general-purpose compute remaining air cooled

Hybrid designs can be an excellent “bridge strategy,” but they require clear operational boundaries: maintenance procedures, spare parts, monitoring, and facility coordination need to be documented and adapted.
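
A quick way to size the air side of a hybrid rack is to split the load by the share of heat the liquid loop captures. The sketch below assumes a hypothetical 60 kW rack in which cold plates capture 75% of the heat; both numbers are placeholders, since the real capture fraction depends on which components carry cold plates.

```python
def split_heat_load(rack_kw, liquid_capture_fraction):
    """Return (liquid_kw, air_kw) for a rack given the share of heat captured by liquid."""
    if not 0.0 <= liquid_capture_fraction <= 1.0:
        raise ValueError("capture fraction must be between 0 and 1")
    liquid_kw = rack_kw * liquid_capture_fraction
    return liquid_kw, rack_kw - liquid_kw


# Hypothetical rack: 60 kW total, 75% of heat captured by cold plates.
liquid_kw, air_kw = split_heat_load(60, 0.75)

print(f"liquid loop carries {liquid_kw:.0f} kW, room air carries {air_kw:.0f} kW")
```

In this example the residual air load is 15 kW, which is still at the top of the traditional air-cooled range, so the room's airflow design continues to matter even after the rack goes liquid.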

What are the advantages of liquid cooling for data centers?

Higher density readiness

The rise of AI servers is a major forcing function. Racks that once drew 5 kW to 15 kW are now being designed for 30 kW, 60 kW, and beyond, and some reporting on advanced AI systems describes densities higher still.

Potential efficiency gains

Depending on architecture, liquid cooling can:

- Reduce server fan power or even allow for removal of fans

- Improve heat rejection efficiency and provide better thermal margins

- Enable warmer water loops in some designs (supporting economization)

Better control and fewer hot spots

Liquid cooling often provides tighter thermal control at the component level, reducing surprises from airflow turbulence, cable obstructions, and rack-level inconsistencies.

Enables new hardware designs

As platforms evolve, integrating liquid-cooled SSD options can support cohesive system thermal strategies—especially where serviceability is preserved.

What are the disadvantages of liquid cooling for data centers?

Higher upfront complexity and CapEx

Even when the long-term efficiency case is strong, liquid cooling solutions typically require:

- Plumbing infrastructure

- CDUs and heat exchangers

- Monitoring, sensors, and controls

- New commissioning processes

- An understanding of the added weight of liquid-filled systems compared with air-cooled ones

Operational learning curve

Facilities teams, IT teams, and vendors need shared procedures for:

- Leak detection and response

- Quick-disconnect handling

- Preventive maintenance

- Coolant quality management

- Alignment of component choices for each system

Risk perception around leaks

Modern designs work hard to minimize leak probability and impact, but enterprise teams still need to treat leak scenarios as first-class failure modes and plan accordingly.
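
Treating leaks as a first-class failure mode usually means the monitoring layer maps sensor readings to explicit severities with a documented response for each. The sketch below shows one possible shape for that logic. Every field name, sensor type, and threshold in it is a hypothetical placeholder, not a vendor value or an established standard.

```python
from dataclasses import dataclass
from enum import Enum


class Severity(Enum):
    OK = "ok"
    WARNING = "warning"
    CRITICAL = "critical"


@dataclass
class LoopReading:
    """One sample from a hypothetical rack coolant loop."""
    leak_sensor_wet: bool        # moisture sensor near the manifold
    pressure_kpa: float          # loop pressure
    reservoir_level_pct: float   # coolant reservoir fill level


def classify(reading, min_pressure_kpa=150.0, min_level_pct=80.0):
    """Map a reading to a severity. Thresholds are placeholders, not vendor values."""
    if reading.leak_sensor_wet:
        return Severity.CRITICAL   # liquid detected: follow the leak response procedure
    if reading.pressure_kpa < min_pressure_kpa or reading.reservoir_level_pct < min_level_pct:
        return Severity.WARNING    # possible slow loss: inspect before it escalates
    return Severity.OK


samples = [
    LoopReading(leak_sensor_wet=False, pressure_kpa=210.0, reservoir_level_pct=95.0),
    LoopReading(leak_sensor_wet=False, pressure_kpa=140.0, reservoir_level_pct=92.0),
    LoopReading(leak_sensor_wet=True, pressure_kpa=205.0, reservoir_level_pct=94.0),
]

for sample in samples:
    print(classify(sample).value)
```

The value of writing the rules down this way is that facilities and IT teams can review the same thresholds and tie each severity to a named procedure.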

Conclusion

Liquid cooling is becoming a defining capability of the modern data center, particularly as AI accelerates rack density, heat flux, and infrastructure stress. But the real story isn’t “liquid replaces air.” It’s that liquid cooling in the data center expands what’s possible: higher densities, more consistent performance, and new platform designs that would otherwise be impractical or inefficient.

And as compute becomes more central in the AI factory conversation, storage is transitioning to liquid cooling too. The introduction of unique single-sided liquid-cooled SSDs by Solidigm™ signals that SSD liquid cooling is not just a lab concept. It’s becoming part of the toolkit for building the next generation of high-density, AI-ready infrastructure.

FAQs

When do data centers need liquid cooling?

Data centers need liquid cooling when rack density and heat output exceed what airflow can handle efficiently and reliably. Many operators find air-only designs become increasingly difficult past ~20 kW per rack, and AI racks can be far higher than that.

What is the difference between direct-to-chip and immersion cooling?

Direct-to-chip uses cold plates to pull heat from specific components (like GPUs, CPUs, and SSDs), while immersion submerges servers or components in dielectric fluid. Immersion can remove large amounts of heat, but it often requires bigger operational changes.

Is liquid cooling only for AI data centers?

No. While liquid cooling for AI data centers is a major driver today, high-density databases, analytics clusters, and HPC environments also benefit from liquid-based approaches when heat and footprint become constraints.

What is a liquid cooled server rack?

A liquid cooled server rack is a rack designed to interface with liquid cooling infrastructure (such as rear-door heat exchangers, in-row coolers, or manifolds connected to a CDU) so heat can be removed with liquid rather than relying only on room air.

What is a liquid cooled SSD?

A liquid cooled SSD is engineered to transfer heat efficiently to a liquid-cooled interface (often a cold plate) while maintaining data center requirements like predictable fit and serviceability. Solidigm has designs that preserve hot-swap behavior while enabling effective liquid heat removal.

Why do SSDs need liquid cooling?

In high-performance environments, NVMe SSDs can generate enough heat to matter, especially when airflow is reduced in liquid-optimized servers. SSD liquid cooling helps keep performance stable and supports denser platform designs.

What is hybrid cooling?

Hybrid cooling combines air and liquid methods in the same environment, for example, liquid on GPUs/CPUs in AI racks while the rest of the facility stays air cooled. A hybrid cooling system is often the most realistic adoption path for budget-constrained operations.

How much water does data center cooling use?

Data center water use depends on the design. Some cooling systems consume significant water, especially evaporative approaches. Other systems emphasize closed-loop or water-saving designs. With data center water use under increasing scrutiny, teams should consider water and energy conservation early in the design or refit process.

What are the main operational concerns with liquid cooling?

The main operational concerns are leak management, maintenance procedures, coolant quality, and vendor variability. The best mitigations are strategic planning, monitoring, documented processes, and training, not just hardware selection.

How should a team get started with liquid cooling?

Consider a hybrid model with direct-to-chip, rack-level, or in-row adoption. Define clear success metrics like power density, thermal stability, fan energy reduction, and maintenance impact. Then expand once operational confidence is proven.
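
Two of the success metrics named above, fan energy reduction and thermal stability, are straightforward to compute from pilot data. The sketch below shows one possible way to do it, using standard deviation of component temperature as a stand-in for stability. All of the readings are invented for illustration and do not come from a real deployment.

```python
from statistics import pstdev


def percent_reduction(before, after):
    """Percentage drop from a baseline value to a pilot value."""
    return (before - after) / before * 100


# Hypothetical measurements from an air-cooled baseline and a liquid-cooled pilot.
baseline_fan_kw, pilot_fan_kw = 4.0, 1.0
baseline_temps_c = [68, 74, 81, 70, 79]
pilot_temps_c = [61, 62, 63, 62, 61]

fan_saving_pct = percent_reduction(baseline_fan_kw, pilot_fan_kw)
baseline_spread = pstdev(baseline_temps_c)
pilot_spread = pstdev(pilot_temps_c)

print(f"fan energy reduction: {fan_saving_pct:.0f}%")
print(f"temperature spread: {baseline_spread:.1f} C -> {pilot_spread:.1f} C")
```

Agreeing on formulas like these before the pilot starts makes it easier to decide objectively whether to expand.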

About the Author

Cecily Whiteside is Search and Content Specialist at Solidigm. She writes for technology, lifestyle, and health & wellness websites and publications. Cecily has been managing editor at several magazines and contributed as a writer and photographer in others, both in the US and abroad.

References

1) https://spectrum.ieee.org/data-center-liquid-cooling

2) https://www.feace.com/single-post/higher-rack-density-requires-liquid-cooled-servers

3) https://blog.equinix.com/blog/2025/10/01/top-3-myths-about-data-center-operating-temperatures/

4) https://www.solidigm.com/products/technology/edge-ai-seismic-data-processing-immersion-cooling.html

5) https://ambient-enterprises.com/news-insights/why-liquid-cooling-data-center-design-matters/