When AI Runs Out of Memory

SSDs Unlock AI Inference at Scale With RAG and KV Cache Data Offload

If you’ve ever rewatched a favorite episode of a streaming series, you’ve probably appreciated the “Previously on…” recap. Without it, you might have to waste time watching previous episodes for a refresh.

Many AI inference systems don’t get that luxury. When users return to a document or revisit prior context, models often recompute everything from scratch, burning GPU cycles and forcing users to wait tens of seconds for answers they’ve already covered.

At scale, this problem extends beyond the context memory stored in key value (KV) caches to vector databases powering retrieval-augmented generation (RAG), where memory costs balloon just to keep knowledge searchable.

The result is AI that forgets too easily and costs too much to remember. In a new white paper linked here, Solidigm and Metrum AI show how SSDs give AI systems a memory that lasts, without paying a premium for every recall. Assisting our effort was FarmGPU, a data center infrastructure provider with deep storage expertise.

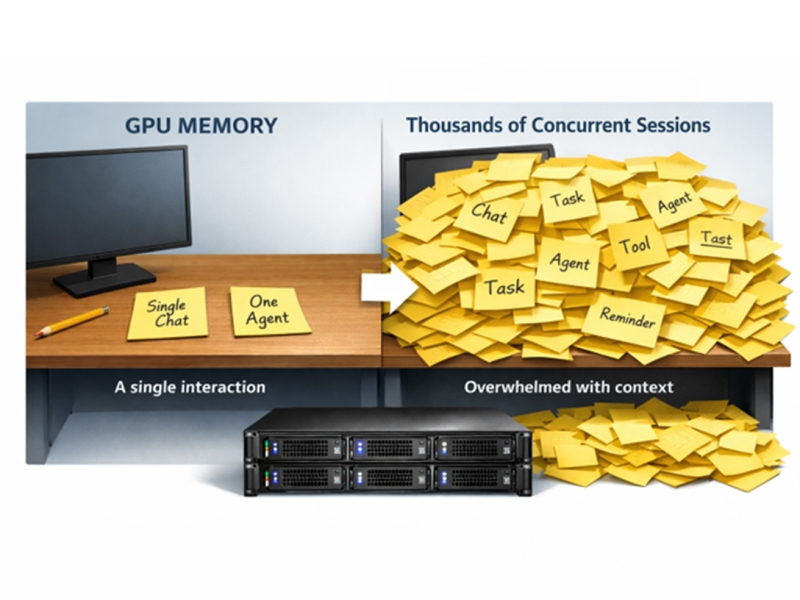

The memory wall in modern AI

AI inference pipelines are growing in two directions at once. On one side, RAG applications rely on massive vector databases that can reach hundreds of millions of embeddings. On the other, large language models are processing ever longer contexts, generating enormous KV caches that must stay accessible for fast response times.

Traditionally, both problems have been addressed with more DRAM and more GPU memory. But that approach is running into hard economic and physical limits.

In our research, we observed that in-memory vector indexing at 100 million vectors required more than 300 GB of DRAM. At the same time, a single long-context document, comprising around 180,000 tokens, can consume over 90% of GPU VRAM, leaving little room for concurrency or reuse.

The result is a system that scales in cost faster than it scales in value.

Offloading vector search: SSDs change the economics of RAG

One of the clearest findings in the study is how SSD-offloaded vector retrieval fundamentally changes the cost-performance curve for RAG.

By using DiskANN indexing with vector data stored on Solidigm™ D7-PS1010 SSDs, the tested system reduced DRAM requirements at 100 million vectors from 331GB down to 116GB, a 65% reduction, without sacrificing accuracy. In fact, recall remained at or above 99% even at large scale.

Performance improved as well. At production-representative concurrency (1,024 threads), SSD-offloaded DiskANN delivered 14% higher query throughput than DRAM-bound HNSW indexing, along with lower average and tail latency. This challenges the long-held assumption that moving data to storage necessarily means slower results.

For RAG pipelines, the implication is powerful. Organizations can grow their knowledge bases from millions to hundreds of millions of vectors simply by adding SSD capacity rather than by redesigning servers around ever larger memory footprints.

KV cache offload: Making long-context AI practical

Vector search is only half of the story. The other major bottleneck in AI inference is the KV cache generated during attention computation in large language models.

Without offload, switching back to a previously analyzed document forces the system to recompute the entire KV cache, potentially taking 30 to 40 seconds before the first token is returned in the case of a big prompt. That delay breaks investigative and analytical workflows, especially when users move back and forth between documents.

The white paper shows how extending the memory hierarchy — keeping active KV data in GPU memory and additional context on Solidigm SSDs — dramatically changes this experience.

When cached KV tensors were retrieved from SSD instead of recomputed, time to first token dropped from ~39 seconds to just 1.43 seconds, a 27× improvement. Models no longer reprocess the same context twice, and GPUs spend their time generating insight rather than repeating work they’ve already done.

Just as importantly, this approach avoids the need to scale GPU VRAM for workloads that are memory bound. SSDs provide a far more cost-effective tier for persistence and reuse.

Performance, predictability, and cost — together

Across both vector retrieval and KV cache offload, the data points to a consistent conclusion: SSDs are no longer just a capacity tier. With the right software architecture, they become an active performance layer in AI inference.

In synthetic and production benchmarks alike, SSD-offloaded designs delivered:

- 23–26% higher query throughput across datasets from 1M to 100M vectors

- Up to 65% lower DRAM consumption at large scale

- More than 2× improvement in queries per dollar at the infrastructure level

For enterprises building AI systems meant to last, this matters. Memory prices are only going in one direction in 2026. GPU resources are precious. Architectures that assume everything must live in DRAM or VRAM simply don’t scale economically.

A new role for storage in AI

The takeaway is compelling. Large-scale AI inference systems no longer just benefit from high-performance SSDs, they require them.

By offloading RAG data structures and KV caches to high-performance NVMe SSDs, organizations can break through memory barriers that limit scale, responsiveness, and ROI. The result is AI infrastructure that grows with demand, delivers consistent performance, and makes better use of every compute dollar.

By letting AI remember instead of re-learn, SSDs turn inference into a true “Previously on…” experience where context is restored in seconds, GPUs stay productive, and insight doesn’t have to start from scratch.

Read the Metrum AI White Paper here: Breaking Memory Barriers in Video Analytics White Paper

About the Author

Ace Stryker is Director of AI & Ecosystem Marketing at Solidigm where he focuses on emerging applications for the company’s portfolio of data center storage solutions.