What NVIDIA GTC ’26 Made Clear: AI’s Next Leap Depends on Storage

If there was one underlying theme across NVIDIA GTC ‘26 in San Jose, CA this March, it wasn’t bigger models or flashier demos. It was this:

AI progress is no longer gated by compute alone. It’s increasingly gated by how fast and how efficiently you can move and store data.

Here are five takeaways that matter whether you’re building new AI systems or scaling your current systems.

1. Your GPUs are only as good as your data pipeline

We’ve officially entered the era of accelerators worth tens of thousands of dollars sitting idle, not because they lack compute, but because they’re waiting on data.

Modern GPUs like those from NVIDIA are engineered for massive parallelism, but they’re only effective when they are continuously fed with high-throughput, low-latency data streams.

A starved accelerator isn’t just inefficient. It’s expensive. This is where storage architecture becomes a first-class concern:

- High-performance NVMe tiers

- Parallel file systems

- Data locality strategies

According to NVIDIA, end-to-end system balance is critical to achieving full GPU utilization.1 In practice, this means storage is now an integral part of your compute strategy, rather than an afterthought.

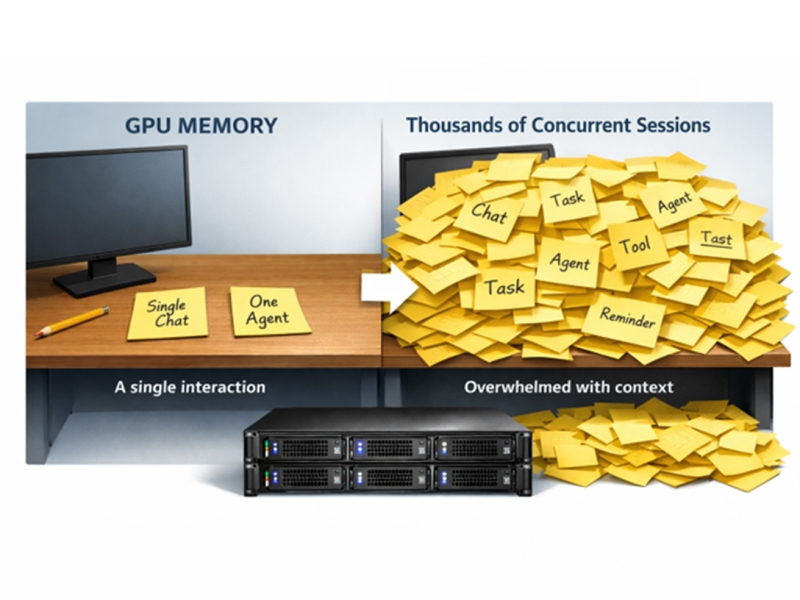

2. AI is evolving fast, data is growing even faster

The evolution of AI applications has been happening in waves:

- 2024: Chatbots

- 2025: Copilots

- 2026: Autonomous agents

Each step up the stack increases not just value, but data intensity.

Agents don’t just respond. They plan, iterate, call tools, and generate sophisticated outputs. That means a single user interaction can explode into dozens or even hundreds of model operations.

Industry research from firms like Gartner has already highlighted that agent-based systems will dominate enterprise AI adoption this decade, and those systems are fundamentally more data-hungry. 2

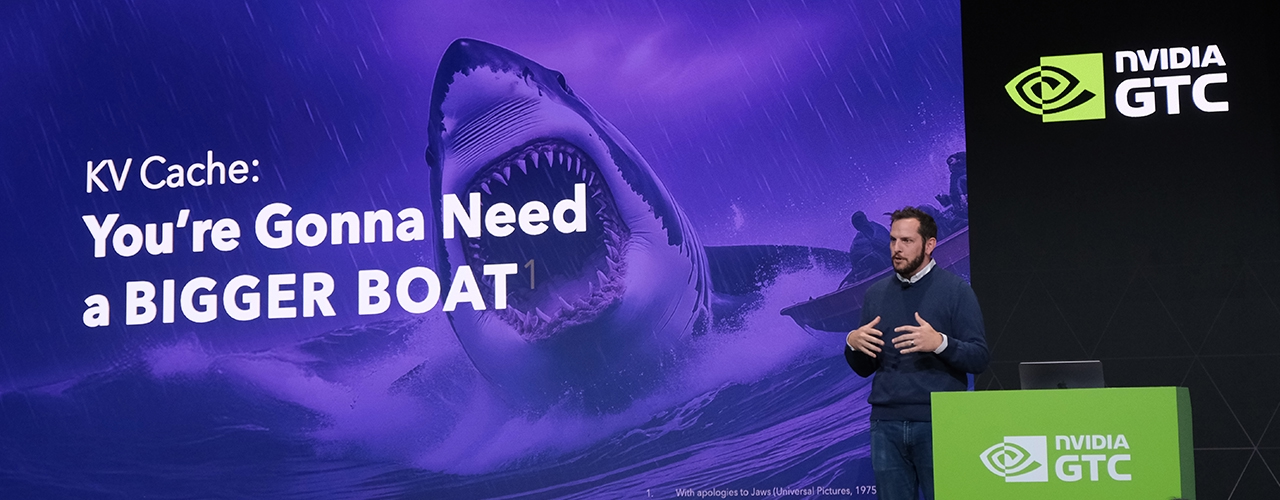

3. Inference is becoming the new data tsunami

Training used to dominate infrastructure conversations. Not anymore. Inference is now the primary driver of scale, and it’s getting weightier:

- Larger context windows. Read more about it in this article: Inference Context Memory Storage (ICMS): Why AI inference is becoming a problem only flash can solve

- Retrieval-augmented generation (RAG). Read more about it in this article: Unlocking AI Scale With SSD Offload Techniques

- Multi-step agent workflows.

A single prompt doesn’t stay a single prompt. It branches. And all of that requires fast access to large working sets of data. DRAM alone can’t keep up. It’s too expensive and too limited in capacity. That’s why we’re seeing a shift toward tiered memory architectures, where high-performance SSDs extend effective memory capacity.

Companies like NVIDIA have emphasized the role of storage in enabling scalable inference, especially as models rely more heavily on external data.

4. Tokens per watt is the metric that actually matters

There’s a quiet shift happening in how AI success is measured. It’s not just tokens per second. It’s tokens per watt.

Why? Because power is a hard constraint. Running large-scale AI systems is expensive not just in terms of hardware, but in terms of energy. Inefficient systems don’t scale economically and improving efficiency isn’t always about new chips. It’s often about increasing storage density, minimizing idle compute cycles, and reducing data movement.

As highlighted across infrastructure discussions at NVIDIA GTC, efficiency is now a system-wide problem, not just a silicon problem.

5. AI will be both centralized and distributed: Storage enables both

There’s a false dichotomy in AI architecture conversations, that of centralized vs. edge. The reality is that you often need both.

Centralized AI factories handle model training, large-scale inference, and data aggregation. Edge deployments, on the other hand, are relied on for real-time decision making, latency sensitive workloads, and applications with high data sovereignty requirements.

What connects them both? Storage

Both AI factories and edge deployments require high throughput, capacity, and scalability. Without ongoing breakthroughs in storage performance and density, neither can reach its full potential.

Final thoughts

AI innovation is accelerating as the limiting factor is shifting. In 2026, it’s not compute. It’s not models. It’s data infrastructure.

That’s where Solidigm comes in. We are powering the next generation of AI with industry-leading, high-capacity storage built for performance, efficiency, and scale. Because in the end, the future of AI doesn’t just depend on how fast you can think. It depends on how fast you can feed the machine.

About the Author

Ace Stryker is Director of AI & Ecosystem Marketing at Solidigm where he focuses on emerging applications for the company’s portfolio of data center storage solutions.

References