Anatomy of a Prompt

Reframing "prompt engineering" as an infrastructure discipline

Prompt structure systematically generates state objects that influence storage architecture and the quality-of-service requirements for LLM inference and RAG.

This white paper provides a mapping artifact that connects prompt layers to state objects (KV cache blocks, retrieval payloads, and tool artifacts), I/O patterns, dominant SLOs, and tier placement decisions, reframing "prompt engineering" as an infrastructure discipline.

Two procurement-grade implications follow:

- Long context and agentic workflows turn "GB per session" into "TB per fleet" through KV scaling under concurrency, and

- RAG behaves like a low-queue-depth random-read workload whose p99/p99.9 latency, not headline IOPS at high queue depth, gates end-user time-to-first-token.

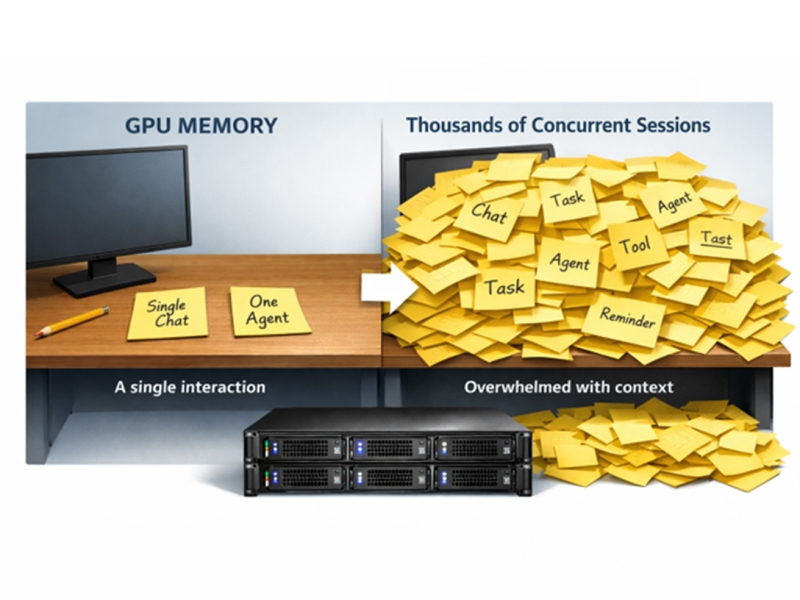

A production prompt is a compiled context bundle: policies, templates, user intent, session history, retrieved evidence, tool schemas, and tool outputs. For a given model and serving stack, prompt structure determines which state objects are instantiated (KV cache blocks, retrieval payloads, tool artifacts) and how they scale.

Those state objects impose measurable I/O shapes and QoS requirements. Context windows are expanding (some models expose 1M-token windows), increasing prefill work and state footprint.1 Long context and agentic loops increase state; concurrency multiplies it.

- KV cache becomes the dominant in-flight working set and a first-order limiter for TTFT, ITL stability, and cost,2,3,4

- RAG behaves like a low-queue-depth random-read workload under concurrency.

- Procurement success is defined by p99/p99.9 latency at QD1–4 under realistic concurrency and mixed load, not peak IOPS or peak GB/s at high queue depth.5,6,7

If your prompts include large stable prefixes, grounding rules, tool schemas, and retrieval payloads, you are implicitly choosing a tiering design and a tail-latency requirement under concurrency.

Read the full white paper below to learn more.

About the Author

Jeff Harthorn is AI Applied Research Lead at Solidigm. His work focuses on the relationship between AI workloads and storage architecture, with an emphasis on inference, context memory, and data pipeline design. Jeff combines applied research, benchmarking, and technical storytelling to turn complex infrastructure topics into actionable insights for customers, partners, and senior leadership. He holds a Bachelor of Science in Computer Engineering from California State University, Sacramento.

References

1) Google, “Long context,” Gemini API documentation (Google AI for Developers). Available: https://ai.google.dev/gemini-api/docs/long-context (accessed Feb. 24, 2026). (Google AI for Developers)

2) NVIDIA, “Accelerate Large-Scale LLM Inference and KV Cache Offload with CPU-GPU

Memory Sharing,” NVIDIA Technical Blog (Sep. 5, 2025). Available: https://developer.nvidia.com/blog/accelerate-large-scale-llm-inference-and-kv-cache-offload-with-cpu-gpu-memory-sharing/ (accessed Feb. 24, 2026). (NVIDIA Developer)

3) Hugging Face, “KV cache strategies,” Transformers documentation (v4.55.4). Available: https://huggingface.co/docs/transformers/v4.55.4/en/kv_cache (accessed Feb. 24, 2026). (Hugging Face)

4) W. Kwon, et al., “Efficient Memory Management for Large Language Model Serving with PagedAttention,” arXiv:2309.06180. Available: https://arxiv.org/abs/2309.06180 (accessed Feb. 24, 2026). (arXiv)

5) J. Dean and L. A. Barroso, “The Tail at Scale,” Communications of the ACM, vol. 56, pp. 74–80 (2013). Available: https://research.google/pubs/the-tail-at-scale/ (accessed Feb. 24, 2026). (Google Research)

6) Intel, “Performance Benchmarking for PCIe* and NVMe* Enterprise Solid-State Drives,” White Paper (Feb. 2015). Available: https://www.intel.com/content/dam/www/public/us/en/documents/white-papers/performance-pcie-nvme-enterprise-ssds-white-paper.pdf (accessed Feb. 24, 2026). (intel.com)

7) S.-H. Cho, et al., “Efficient Garbage Collection Algorithm for Low Latency SSD,” Electronics, vol. 11, no. 7, 1084 (2022). Available: https://www.mdpi.com/2079-9292/11/7/1084 (accessed Feb. 24, 2026). (MDPI)